In the not-so-distant past, Alexa, Amazon’s voice assistant, was the belle of the ball. From big-name pizza chains to leading ride-hailing services, countless companies scrambled to launch Alexa “Skills” in a bid to ride the wave of this revolutionary technology. The rush generated massive attention, but few, if any, of the participating brands saw a meaningful return on their investment.

In reality, the average user’s interactions with Alexa were rather pedestrian. Requests such as “Alexa, book me a spa session” were rare. Instead, users favored simple commands like “Alexa, what time is it?” or “Alexa, set a timer for 10 minutes.” Companies that had anticipated a windfall from their Alexa investment found themselves building for a device that, for most users, functioned as little more than a “glorified clock radio.”

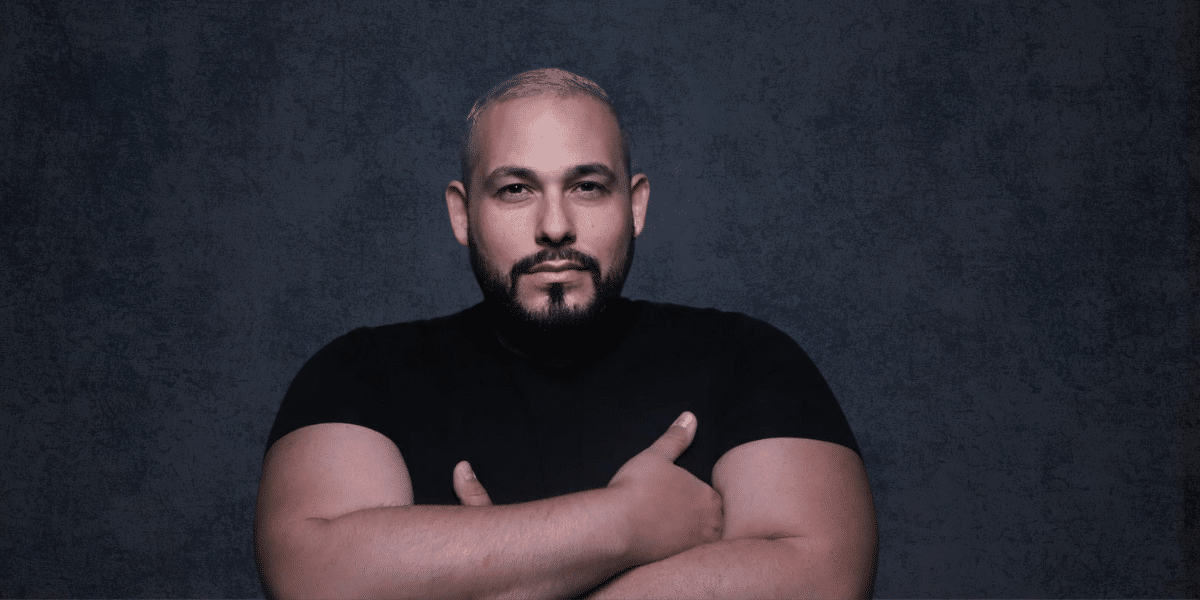

As Rob LoCascio has noted, generative AI and large language models (LLMs) are now pioneering the next frontier of interaction and are expected to surpass rules-based assistants like Alexa and Siri. Many believe generative AI’s influence on business will be as momentous as the creation of the personal computer. However, to avoid repeating the mistakes of earlier unsuccessful AI efforts like Alexa, businesses must heed the lessons those efforts taught.

The Perils of Misaligned Models and Data

Every AI application rests on two elements: the model and the dataset. Together, they form the framework within which AI generates results. Recent high-profile releases like ChatGPT have put models in the limelight, but without the right pairing of model and dataset, neither businesses nor their customers will achieve the outcomes they want.

How well generative AI performs depends on how well its underlying model aligns with the data it is fed. Suppose you work for a healthcare organization that wants to provide personalized COVID safety recommendations based on where each customer lives. You’ve chosen the best model for the job: you’re confident it can deliver information in an unbiased tone, and it integrates seamlessly with your backend systems to prompt appointment bookings.

Yet if you pull your COVID-related information from the cacophony of the public internet, you’re setting yourself up for failure. Given the flood of misinformation and noise about COVID online, that dataset cannot support the accurate, unbiased recommendations your model was chosen to deliver.

This disconnect mirrors the downfall of Alexa’s Skills. The brands building on Alexa’s platform failed to find success because the model, Alexa itself, was (and still is) fundamentally designed to bolster Amazon’s business. It was never meant to promote businesses outside the Amazon ecosystem. Consequently, the goals of restaurants, transportation services, and their customers were never truly achievable with Alexa.

As the era of generative AI begins, Alexa’s cautionary tale underscores the importance of aligning models with datasets. Doing so helps ensure that AI serves its intended purpose: meeting the specific needs and goals of businesses and their customers alike.